Introduction

No engineered system exists in isolation.

The moment it is used, it becomes part of human behavior—shaped by habits, shortcuts, errors, and intent.

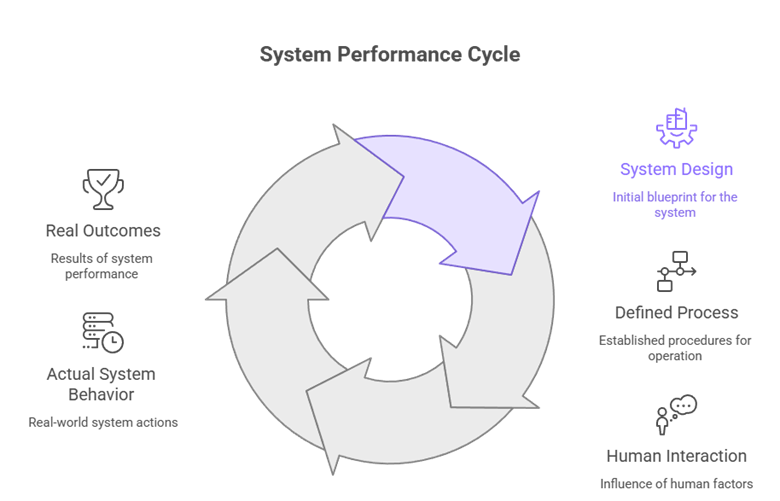

Systems Are Used, Not Just Designed

In theory, systems operate exactly as defined. In practice, they operate as people use them.

Engineers often design for correctness—assuming users will follow instructions, respect constraints, and behave predictably. But real environments are different. People adapt systems to their needs, sometimes in ways never intended by the designer.

A machine may be designed for precision, but an operator under time pressure may prioritize speed. A process may include safety steps, but a fatigued worker may skip them. These are not rare exceptions—they are normal human responses.

A practitioner engineer understands that the real system includes the human, not just the hardware or software.

Why Human Behavior Changes Outcomes

Human behavior introduces variability that cannot be eliminated—only anticipated.

People:

- take shortcuts when systems are slow

- make mistakes when overloaded

- develop habits over time

- creatively bypass restrictions to achieve goals

This means that even a perfectly engineered system can produce poor outcomes if it does not align with how people actually behave.

For example, a safety interlock may be bypassed if it interrupts workflow too frequently. A complex interface may lead users to ignore critical information. A rigid process may be abandoned entirely if it feels impractical.

The system does not fail because it is technically wrong—it fails because it does not fit human reality.

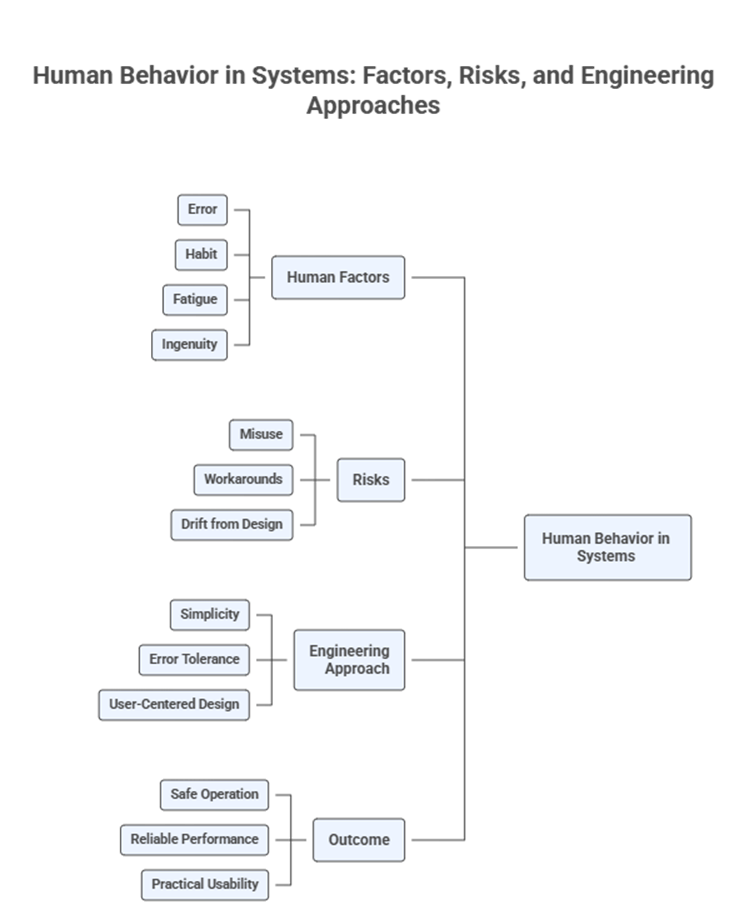

Hidden Risks in Ignoring Behavior

When human behavior is not considered, risks do not disappear—they shift into unpredictable forms.

These risks often include:

- misuse of systems in unintended ways

- silent workarounds that bypass safety or control

- gradual drift from designed procedures

- over-reliance on user discipline instead of system design

The most dangerous aspect is that these issues often remain invisible until failure occurs. On paper, everything appears correct. In reality, the system is operating differently.

A practitioner engineer assumes that if a system can be misused, it eventually will be.
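One way to make silent workarounds visible before they cause a failure is to record how often a safeguard is bypassed and flag the places where bypassing has become routine. The following is a minimal sketch under stated assumptions, not a description of any particular system: the event format (`station`, `action`), the function names `bypass_rates` and `flag_drift`, and the 20% threshold are all hypothetical and chosen only for illustration.

```python
from collections import Counter


def bypass_rates(events):
    """Return the fraction of operations at each station that bypassed the safeguard.

    `events` is an iterable of (station, action) tuples, where action is
    either "run" (normal operation) or "bypass" (safeguard overridden).
    This event format is an assumption made for the example.
    """
    totals = Counter()
    bypasses = Counter()
    for station, action in events:
        totals[station] += 1
        if action == "bypass":
            bypasses[station] += 1
    return {station: bypasses[station] / totals[station] for station in totals}


def flag_drift(events, threshold=0.2):
    """List stations whose bypass rate exceeds the (hypothetical) threshold."""
    rates = bypass_rates(events)
    return sorted(station for station, rate in rates.items() if rate > threshold)


if __name__ == "__main__":
    log = [
        ("A", "run"), ("A", "run"), ("A", "bypass"),
        ("B", "run"), ("B", "run"), ("B", "run"), ("B", "run"),
    ]
    print(flag_drift(log))
```

A high bypass rate is a signal to investigate rather than a verdict. It may mean the safeguard interrupts the workflow too often, which is a design problem and not a discipline problem.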

Designing for Real Behavior

Good engineering does not try to eliminate human behavior—it works with it.

This requires designing systems that:

- are simple to use under pressure

- make correct actions easier than incorrect ones

- tolerate minor errors without catastrophic outcomes

- guide users rather than rely on perfect compliance

For example, clear visual indicators reduce cognitive load. Automation can remove repetitive tasks that lead to fatigue. Physical constraints can prevent incorrect assembly.

The goal is not to force perfect behavior, but to make safe and effective behavior the natural path.
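In software, "tolerating minor errors" can be pictured as an input handler that corrects small slips, refuses large ones, and tells the operator what happened in both cases. The sketch below is an illustration only: the function `apply_setpoint`, the `SetpointResult` type, and the numeric limits are assumptions invented for this example, and real limits would come from the system being controlled.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical limits for an illustrative temperature setpoint (degrees C).
SAFE_MIN = 20.0
SAFE_MAX = 80.0
TOLERANCE = 5.0  # how far outside the range still counts as a minor slip


@dataclass(frozen=True)
class SetpointResult:
    accepted: bool           # True if a value was applied
    value: Optional[float]   # the value actually applied, if any
    message: str             # feedback shown to the operator


def apply_setpoint(requested: float) -> SetpointResult:
    """Apply a setpoint, absorbing small errors and rejecting large ones."""
    if SAFE_MIN <= requested <= SAFE_MAX:
        return SetpointResult(True, requested, "Setpoint applied.")

    # Nearest value that is still inside the safe range.
    nearest = min(max(requested, SAFE_MIN), SAFE_MAX)

    if abs(requested - nearest) <= TOLERANCE:
        # Minor slip: use the nearest safe value and say so.
        return SetpointResult(
            True, nearest,
            f"Requested {requested} is out of range; using {nearest} instead.",
        )

    # Large error (for example an extra digit): change nothing.
    return SetpointResult(
        False, None,
        f"Requested {requested} is far outside {SAFE_MIN}-{SAFE_MAX}; nothing changed.",
    )


if __name__ == "__main__":
    for attempt in (50.0, 83.0, 800.0):
        print(apply_setpoint(attempt).message)
```

The design choice here is that a mistake never reaches the hardware unexamined, and the operator is never left guessing about what the system did. Whether a small overshoot should be corrected automatically or rejected outright depends on the application.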

The Role of Fatigue, Habit, and Ingenuity

Human behavior is shaped over time and by the conditions people work under.

Fatigue reduces attention and increases error rates. Habit creates consistency, but also resistance to change. Ingenuity allows people to solve problems—but also to bypass system limitations.

These factors mean that systems evolve after deployment. Users find faster ways, easier paths, and alternative methods. Some of these improve efficiency. Others introduce risk.

A system that ignores these dynamics will gradually drift away from its intended design.

A system that anticipates them is far more likely to remain stable as human behavior adapts.

Practical Table

| Factor / Question | Why It Matters | Example |
| --- | --- | --- |
| How will users actually behave? | Real behavior often differs from intended use | Skipping steps to save time |
| What happens under pressure? | Stress changes decision-making | Ignoring warnings during urgent operations |
| Can the system be bypassed? | Workarounds will be created if systems are restrictive | Disabling safety features |
| Is the system intuitive? | Complexity increases misuse | Confusing interface leading to incorrect inputs |
| How does fatigue affect use? | Long usage reduces attention and accuracy | Operator errors in extended shifts |

Key Takeaways

- Systems do not operate independently—they interact with human behavior

- Human variability is predictable in pattern, even if not in detail

- Ignoring behavior leads to misuse, workarounds, and hidden risks

- Good design aligns with natural human actions, not ideal behavior

- Fatigue, habit, and ingenuity continuously reshape system use

Conclusion

A system is never just a collection of components—it is a relationship between design and human behavior.

Ignoring this relationship creates a gap between how a system is supposed to work and how it actually works. That gap is where failures emerge.

A practitioner engineer does not assume disciplined users or perfect conditions. Instead, they design with the understanding that people will adapt, improvise, and sometimes make mistakes.

The goal is not to control behavior completely, but to shape the environment in which behavior occurs.

In the real world, the success of a system is defined not by its design alone but by how well it performs in the hands of imperfect humans.